Mind over material: Stimulus realism changes the brain’s response but not in face-selective areas

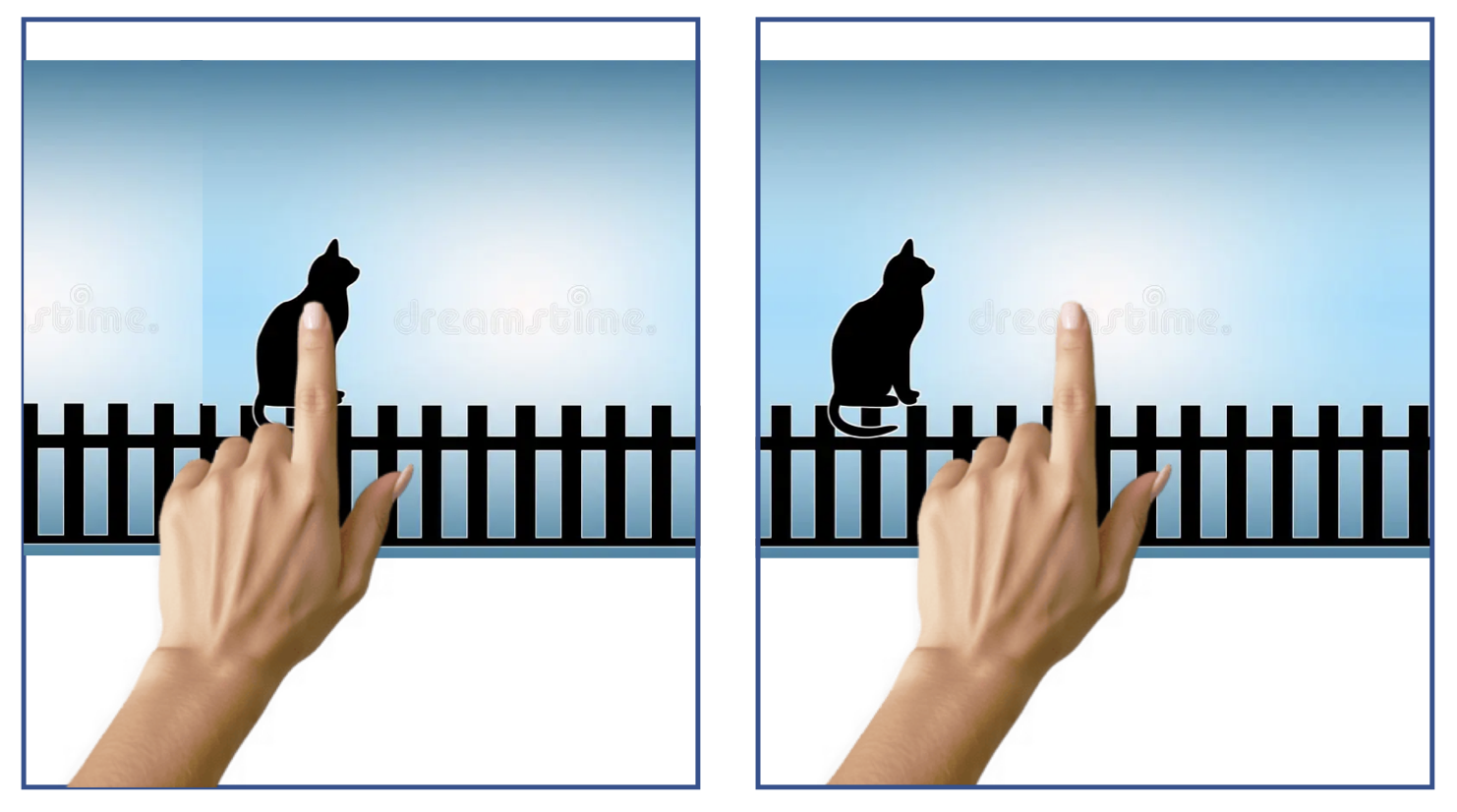

Figure 1. You probably aren’t aware of binocular disparity in your everyday life. Try this exercise to notice this difference in perspective. First, close your left eye. Put your finger out in front of you and use it to cover an object in your field of view. Then, without moving your hand, open your left eye and close your right! You should notice the item jump out from behind your finger. This may seem like a silly trick, but actually, this is an important physiological feature that the brain exploits for 3D vision!

If you’ve taken a research methods course, you probably already know that experimental control is critical for scientific experiments. In order to interpret the results of experiments, we want to be able to say for certain that the only thing that changes between conditions is the variable we are interested in. To establish tight experimental control, most science takes place in a laboratory where every part of the participants’ experience is carefully considered. Experimental control is essential for good science, but in attempts to maximize it, experiments can become more and more distinct from the real-life behaviours that they are trying to investigate. The Culham Action and Real-World Imaging Laboratory here at Western University is committed to introducing realism to cognitive neuroscience.

A major aim of Culham’s team is to develop paradigms and stimulus sets that allow participants to have more realistic experiences in the fMRI scanner, a machine that enables scientists to examine which parts of the brain are active by tracking changes in blood flow. The commitment to developing realistic experiential paradigms is motivated by emerging work suggesting that when we study behaviour in tightly controlled artificial paradigms, we are missing important effects! Prior work has demonstrated important differences in behaviour and brain activity when comparing images to real objects. It is easier in the lab setting to present participants with pictures instead of objects, but this work tells us that the two are not equivalent.

Motivated by these prior findings, Eva Deligiannis (a graduate of the Neuroscience MSc program here at Western) compared the brain’s response to 2D photos of faces to the brain’s response to 3D renderings of faces. A major difference between faces seen in real life and those studied in the lab is that in real life we see faces in 3D, whereas in the lab we evaluate 2D pictures. Research by Deligiannis and her team investigated whether the information available in 3D displays would change how the brain responded to pictures of faces.

The first challenge for the team was in the development of the stimulus set. Experimenters took photos of 23 volunteers using two cameras, each positioned 1 meter from the volunteer and angled to face the volunteer. The two cameras mimic two eyes, each capturing a picture of the volunteer’s face. For each face, there were two captured images, one capturing the information the left eye would have sampled, and one capturing the information the right eye would have sampled. To manipulate whether each image was 3D or 2D, researchers varied an important depth cue called binocular disparity. Binocular disparity is the difference between where an object’s image falls on the retina of the right eye and where it falls on the retina of the left eye. This difference occurs because the eyes are on opposite sides of the face, so each eye views the world from a slightly different position. You won’t usually notice this subtle difference in your everyday life; try the exercise in Figure 1! This difference is an important part of how the brain perceives 3D. In the current work, by controlling the image shown to each eye, the experimenters can control the binocular disparity, and can even make the face appear 2D by reducing the binocular disparity to zero.
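To get a feel for the size of this cue, the geometry can be sketched in a few lines of Python. This is a minimal illustration, not the authors’ stimulus code: the function names are hypothetical, the 6.3 cm separation between the eyes is an assumed typical adult value, and the distances in the usage note are arbitrary. The sketch considers a point straight ahead, computes the angle between the two eyes’ lines of sight to that point, and takes the difference between that angle for a target and for the point being fixated.

```python
import math

# Assumed typical adult distance between the eyes, in meters (not from the study).
ASSUMED_INTEROCULAR_M = 0.063


def vergence_angle(distance_m, interocular_m=ASSUMED_INTEROCULAR_M):
    """Angle (radians) between the two eyes' lines of sight to a point
    straight ahead at the given distance."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 2.0 * math.atan(interocular_m / (2.0 * distance_m))


def relative_disparity_deg(fixation_m, target_m, interocular_m=ASSUMED_INTEROCULAR_M):
    """Disparity (degrees) of a target relative to the fixated point.

    Positive values mean the target is nearer than fixation;
    zero means target and fixation lie at the same distance.
    """
    difference = vergence_angle(target_m, interocular_m) - vergence_angle(
        fixation_m, interocular_m
    )
    return math.degrees(difference)
```

For example, `relative_disparity_deg(1.0, 0.9)` gives the disparity of a point 10 cm nearer than a fixation point 1 m away; it is positive because the eyes must converge more for nearer points. When target and fixation are at the same distance the result is zero, which is the situation a zero-disparity (2D) display creates for every point in the image.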

During the experiment, the participant lay in the fMRI scanner and watched images appear. Some images were faces, and others were objects, scenes, or patterns of dots. The participant was told to pay attention to the images and press a button when an image was presented twice in a row. The researchers first used these images to identify parts of the brain that respond more strongly to faces. They then used patterns of dots shown in either 2D or 3D to locate brain regions that are sensitive to depth cues. Once these regions were identified, the researchers could compare how they responded to faces presented in either 2D or 3D.
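The button-press rule described above (respond when an image appears twice in a row) is simple enough to sketch in Python. This is only an illustration under stated assumptions: the function name and the image labels are hypothetical, and nothing here reflects the actual presentation software used in the study.

```python
def repeat_positions(image_sequence):
    """Return the positions (0-based) at which an image is identical to the
    one shown immediately before it, i.e. where a button press is expected."""
    return [
        i
        for i in range(1, len(image_sequence))
        if image_sequence[i] == image_sequence[i - 1]
    ]


# Hypothetical run: labels are placeholders, not the study's stimuli.
example_run = ["face_01", "face_02", "face_02", "scene_01", "dots_01", "dots_01"]
expected_presses = repeat_positions(example_run)  # [2, 5]
```

A task like this keeps participants attending to every image without asking them to judge depth or identity, which matters later when the authors consider whether the task was too simple for depth to be useful.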

Now that they had found regions of the brain that were preferentially activated for faces and for depth, the experimenters were able to look in both sets of regions for differences between 2D and 3D images of faces. First, in depth-selective regions, the authors found differences between the 2D and 3D faces; there was increased activity for 3D faces. This tells us that there are regions of the brain that are able to pick up on the differences in binocular disparity in this stimulus set. The authors speculate that a sensitivity to these kinds of depth cues may be especially important for face stimuli because of the importance of knowing how far away other people are from you in the environment. This is an interesting example of how information we might not think of as immediately relevant (such as depth when evaluating face stimuli) is conveyed by complex stimuli and is used by the brain to guide action.

However, the authors were surprised to find that in face-selective regions, there was no significant difference between the 2D and 3D faces. This means that in the face-selective regions, presenting faces in 3D rather than 2D did not change the activation; for these parts of the brain, flat pictures were just as good as the more realistic faces at eliciting activation.

The authors explore a couple of reasons that the activation in face-selective areas may not have changed for the more realistic faces. One interesting suggestion is that the task may have been too simple for the 3D differences to matter for face-selective regions. The authors suggest that depth may be a more relevant cue in a more complex task, such as face discrimination across viewpoints. Differences in activation across face-selective regions may emerge in tasks where depth is a useful cue.

So, is it “good enough” to study faces using flat pictures instead of realistic 3D images? This study suggests that, for many simple experimental tasks, the answer is yes: 2D images did not differ from 3D images in face-selective regions. At the same time, the results of the current study tell us something we may have missed with more artificial 2D stimuli. Depth-selective visual regions do respond to the 3D images more than the 2D images. Simplified lab stimuli may capture the core of face processing, but more naturalistic images allow researchers to see additional brain systems that contribute to how we perceive people in the real world.

By building methods that balance experimental control with realism, this work moves cognitive neuroscience closer to understanding vision as it actually operates in everyday life.

Original Article: Eva Deligiannis, Marisa Donnelly, Carol Coricelli, Karsten Babin, Kevin M. Stubbs, Chelsea Ekstrand, Laurie M. Wilcox, Jody C. Culham; Binocular cues to 3D face structure increase activation in depth-selective visual cortex with negligible effects in face-selective areas. Journal of Vision 2025;25(11):6. https://doi.org/10.1167/jov.25.11.6.